Chart abstraction is the unglamorous backbone of nearly every quality program in healthcare. A trained abstractor opens a record, reads the report, decides whether the indication on the order matches the procedure performed, checks the impression against the findings, notes whether the report addressed the questions raised by the referring clinician, and logs the result against a measure definition. Published estimates for this kind of work typically run on the order of 55 to 75 minutes per case for mature measure sets, with substantial variation by case complexity, measure breadth, and abstractor training.

Multiply that by application sample sizes (three to ten cases per application for many modality-level programs, hundreds to thousands of cases for registry-grade work) and the labor model becomes the binding constraint on how much evidence a program can afford to compile. The cost is paid twice: once by the program, in staff hours that might otherwise go to clinical care, and once by the field, in measures that get narrower, sample sizes that get smaller, and quality questions that go unasked because the labor to answer them does not exist.
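To make the multiplication concrete, here is a minimal sketch of the arithmetic using the 55 to 75 minute range quoted above. The function names are illustrative, and the registry case counts of 100 and 1,000 are assumed stand-ins for "hundreds to thousands", not figures from any particular program.

```python
# Per-case abstraction time range quoted in the text, in minutes.
LOW_MINUTES, HIGH_MINUTES = 55, 75


def abstraction_hours(cases: int, minutes_per_case: float) -> float:
    """Total abstractor hours for a given number of cases."""
    return cases * minutes_per_case / 60


def hours_range(min_cases: int, max_cases: int) -> tuple:
    """Best case (fewest cases, fastest pace) to worst case (most cases, slowest pace)."""
    return (abstraction_hours(min_cases, LOW_MINUTES),
            abstraction_hours(max_cases, HIGH_MINUTES))


# Modality-level program: three to ten cases per application.
modality = hours_range(3, 10)

# Registry-grade work: 100 and 1,000 cases are assumed illustrative bounds.
registry = hours_range(100, 1000)

print(f"modality-level: {modality[0]:.2f} to {modality[1]:.2f} hours per application")
print(f"registry-grade: {registry[0]:.0f} to {registry[1]:.0f} hours")
```

Under these assumptions a single modality-level application costs roughly 3 to 12.5 abstractor hours, while registry-grade work runs from about 92 hours to 1,250 hours.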

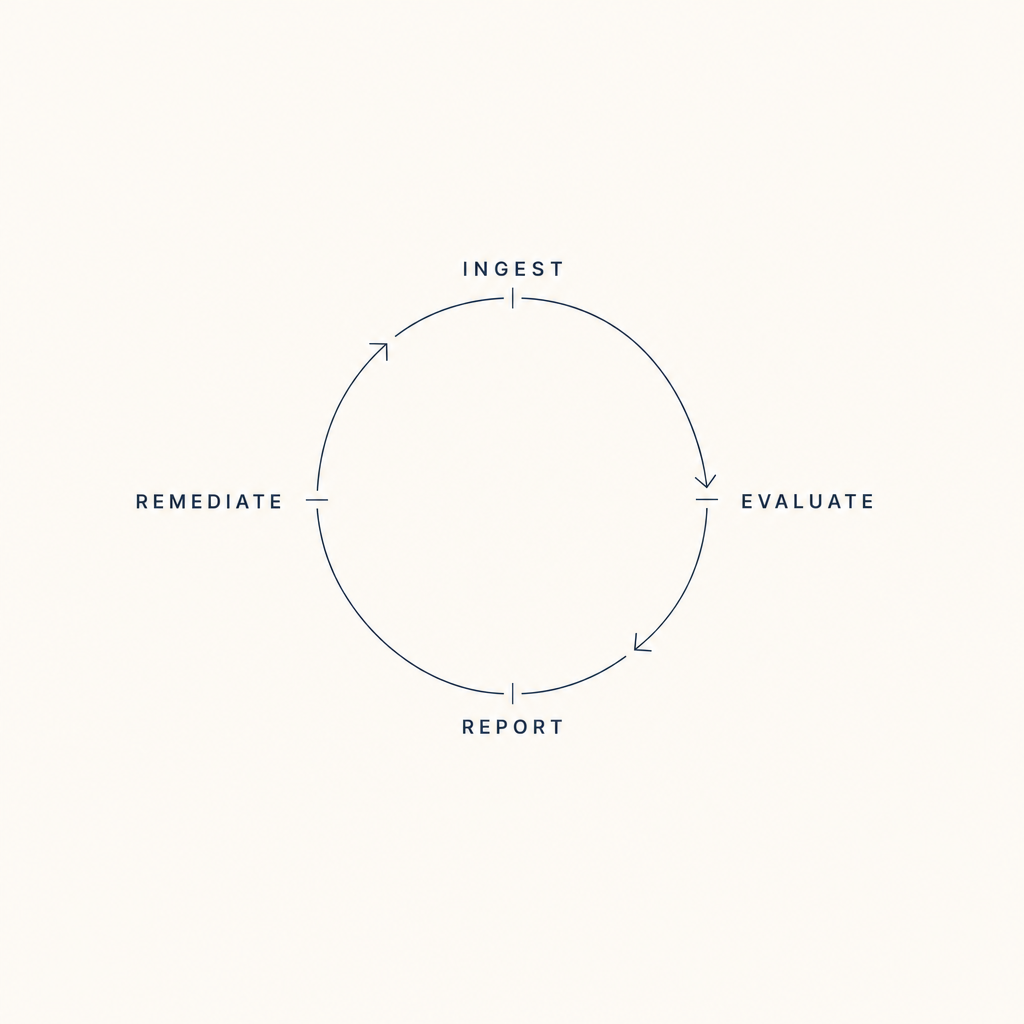

FHIR-native ingest changes the binding constraint. When indications, impressions, finalized reports, signing physicians, and equipment service events are pulled directly from clinical systems as structured data, the abstraction step does not disappear; clinical nuance still requires human judgment on sampled cases. But the completeness check, the volume tally, the credential currency check, and the timeliness measurement all become computed rather than abstracted. The expensive labor is rerouted to the work it was meant to do.
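As a rough illustration of what "computed rather than abstracted" can look like, the sketch below runs a volume tally, a completeness check, and a timeliness measurement over report records shaped like FHIR R4 `DiagnosticReport` resources. The field names (`status`, `effectiveDateTime`, `issued`, `conclusion`, `resultsInterpreter`, `basedOn`) come from that resource; the choice of which fields count as required, the helper names, and the sample records are assumptions for illustration. The sketch also assumes both timestamps carry a full time and offset, and it leaves the credential currency check out because that depends on data outside the report itself.

```python
from datetime import datetime
from typing import Dict, List, Optional

# Fields this sketch treats as required for a "complete" report. This list is
# an assumption, not a published measure definition.
REQUIRED_FIELDS = ("effectiveDateTime", "issued", "conclusion",
                   "resultsInterpreter", "basedOn")


def parse_instant(value: str) -> datetime:
    """Parse a FHIR timestamp, accepting a trailing 'Z' for UTC."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def missing_fields(report: Dict) -> List[str]:
    """Completeness check: required fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not report.get(name)]


def turnaround_hours(report: Dict) -> Optional[float]:
    """Timeliness: hours from study time to report issue, if both are present."""
    start, end = report.get("effectiveDateTime"), report.get("issued")
    if not (start and end):
        return None
    return (parse_instant(end) - parse_instant(start)).total_seconds() / 3600


def summarize(reports: List[Dict]) -> Dict:
    """Compute volume, completeness, and timeliness over finalized reports."""
    final = [r for r in reports if r.get("status") == "final"]
    incomplete = {r["id"]: missing_fields(r) for r in final if missing_fields(r)}
    hours = {r["id"]: turnaround_hours(r) for r in final
             if turnaround_hours(r) is not None}
    return {"final_volume": len(final), "incomplete": incomplete,
            "turnaround_hours": hours}


# Hypothetical sample records; real resources carry many more fields.
reports = [
    {"resourceType": "DiagnosticReport", "id": "r1", "status": "final",
     "effectiveDateTime": "2024-03-01T08:00:00Z", "issued": "2024-03-01T14:30:00Z",
     "conclusion": "No acute findings.",
     "resultsInterpreter": [{"reference": "Practitioner/p1"}],
     "basedOn": [{"reference": "ServiceRequest/s1"}]},
    {"resourceType": "DiagnosticReport", "id": "r2", "status": "final",
     "effectiveDateTime": "2024-03-02T09:00:00Z", "issued": "2024-03-03T09:00:00Z",
     "resultsInterpreter": [{"reference": "Practitioner/p2"}],
     "basedOn": [{"reference": "ServiceRequest/s2"}]},  # no impression recorded
    {"resourceType": "DiagnosticReport", "id": "r3", "status": "preliminary",
     "effectiveDateTime": "2024-03-04T10:00:00Z"},  # not finalized, so not counted
]

summary = summarize(reports)
print(summary)
```

In this sketch the incomplete report `r2` is the kind of case that would be routed to a human abstractor, while the complete report `r1` passes the mechanical checks without anyone opening the chart.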
