The thesis

The Standards already describe the work. The substrate is what watches it.

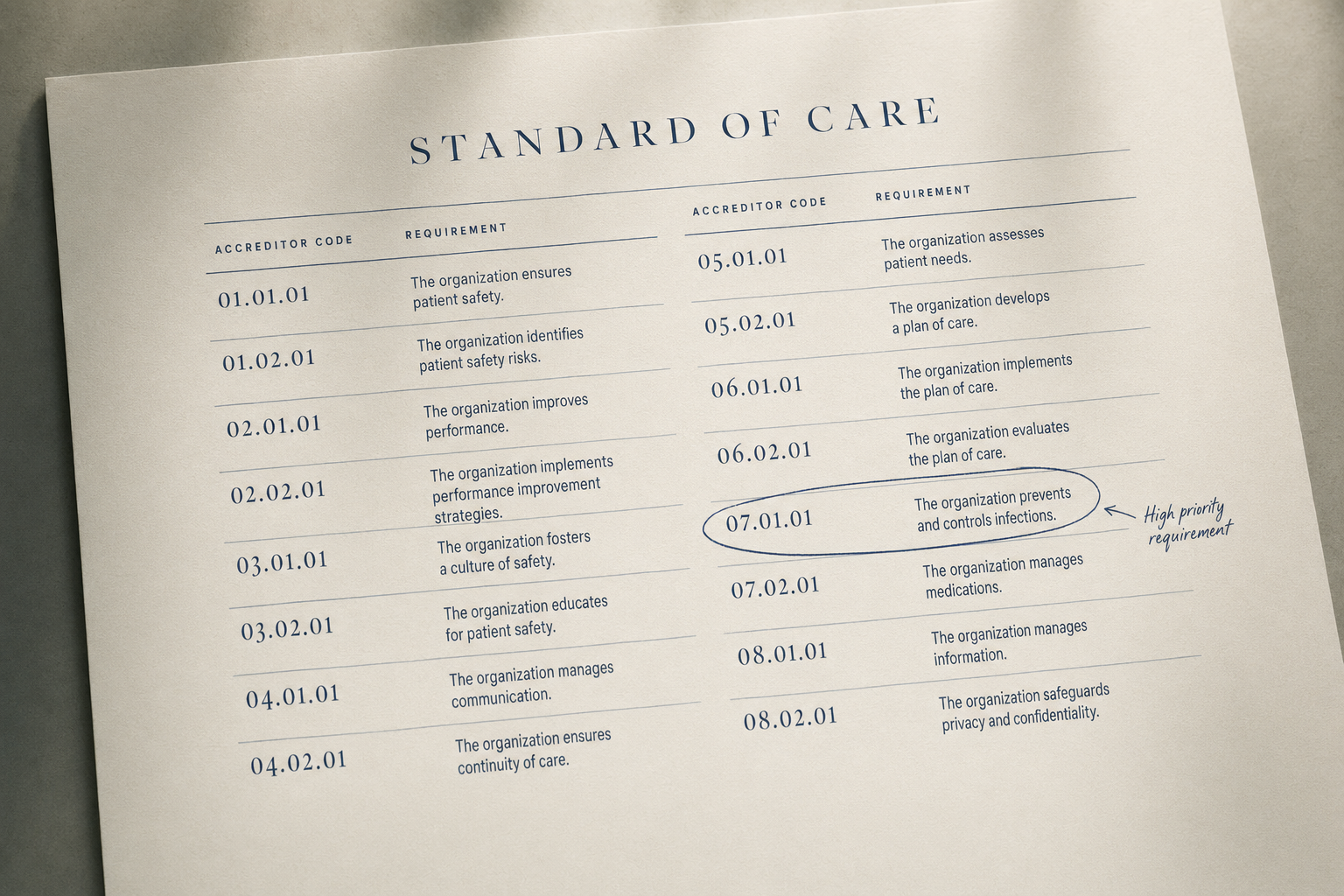

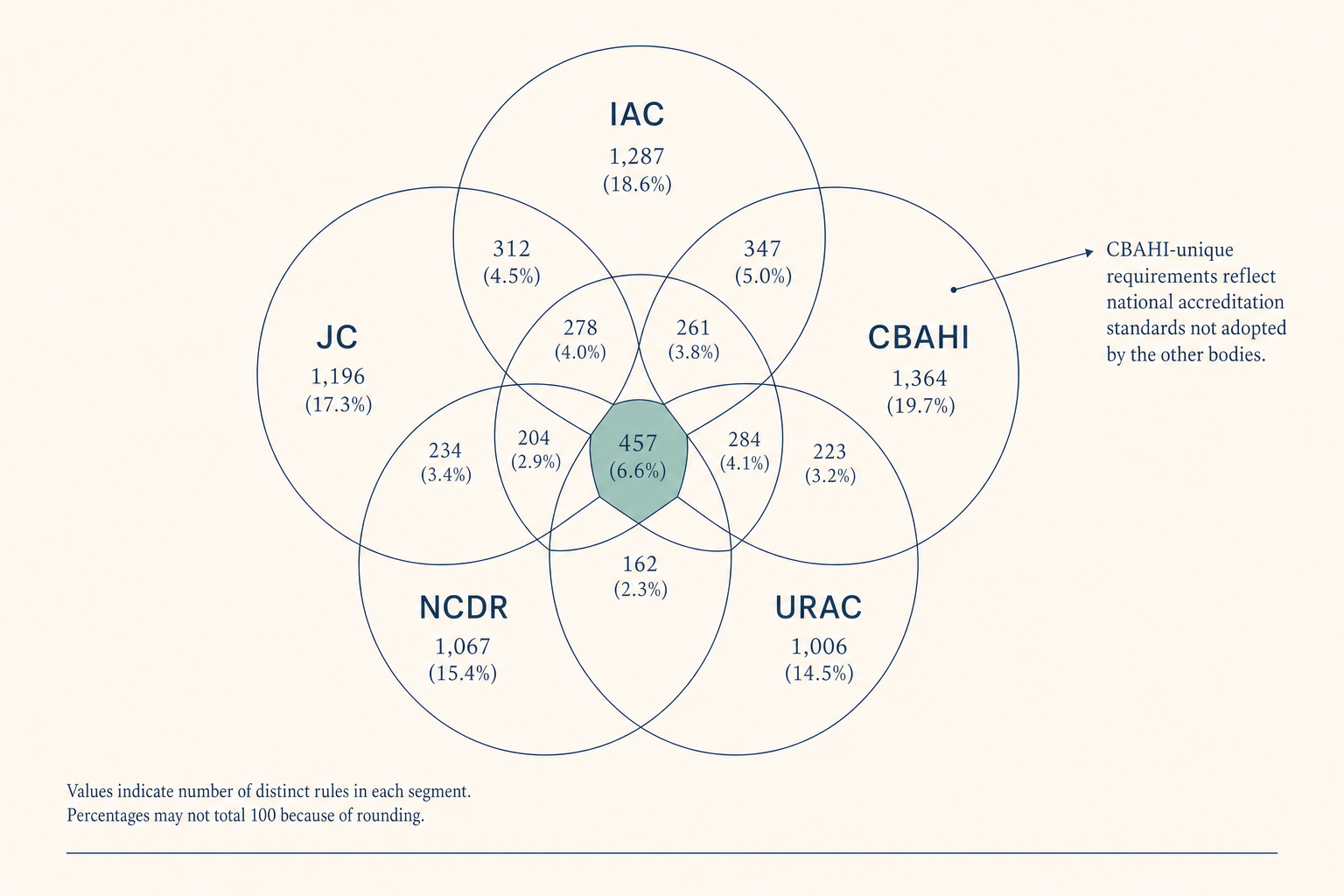

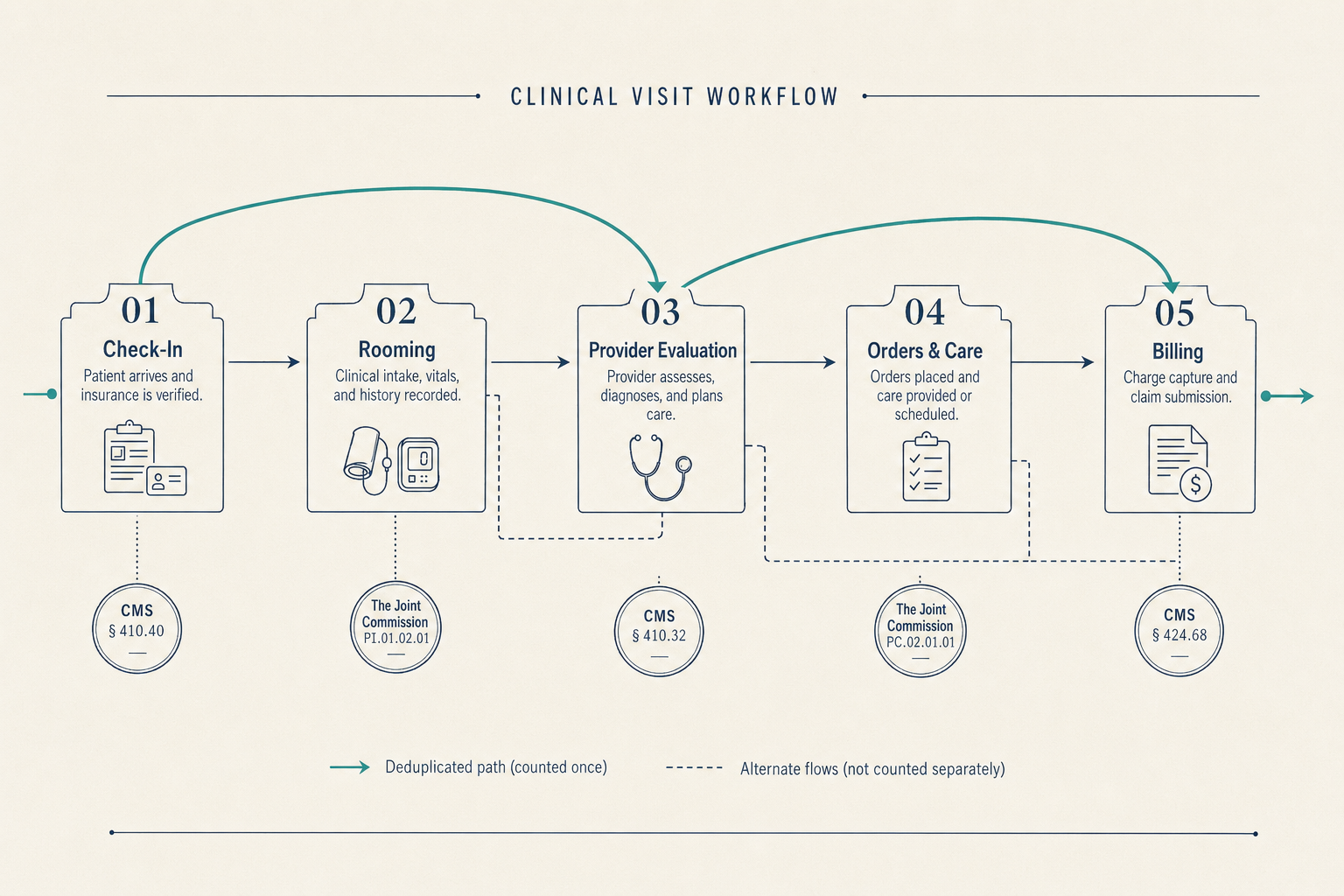

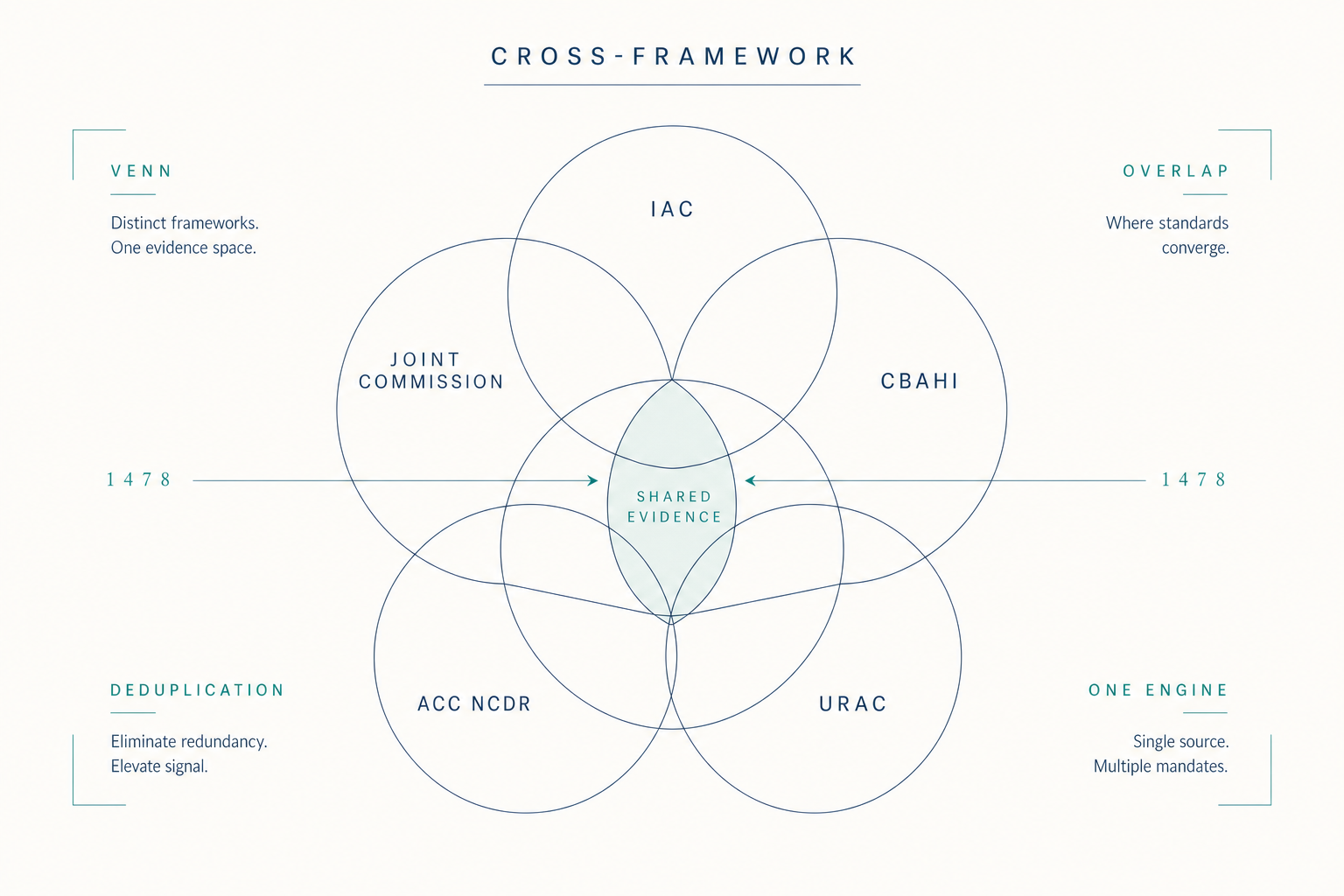

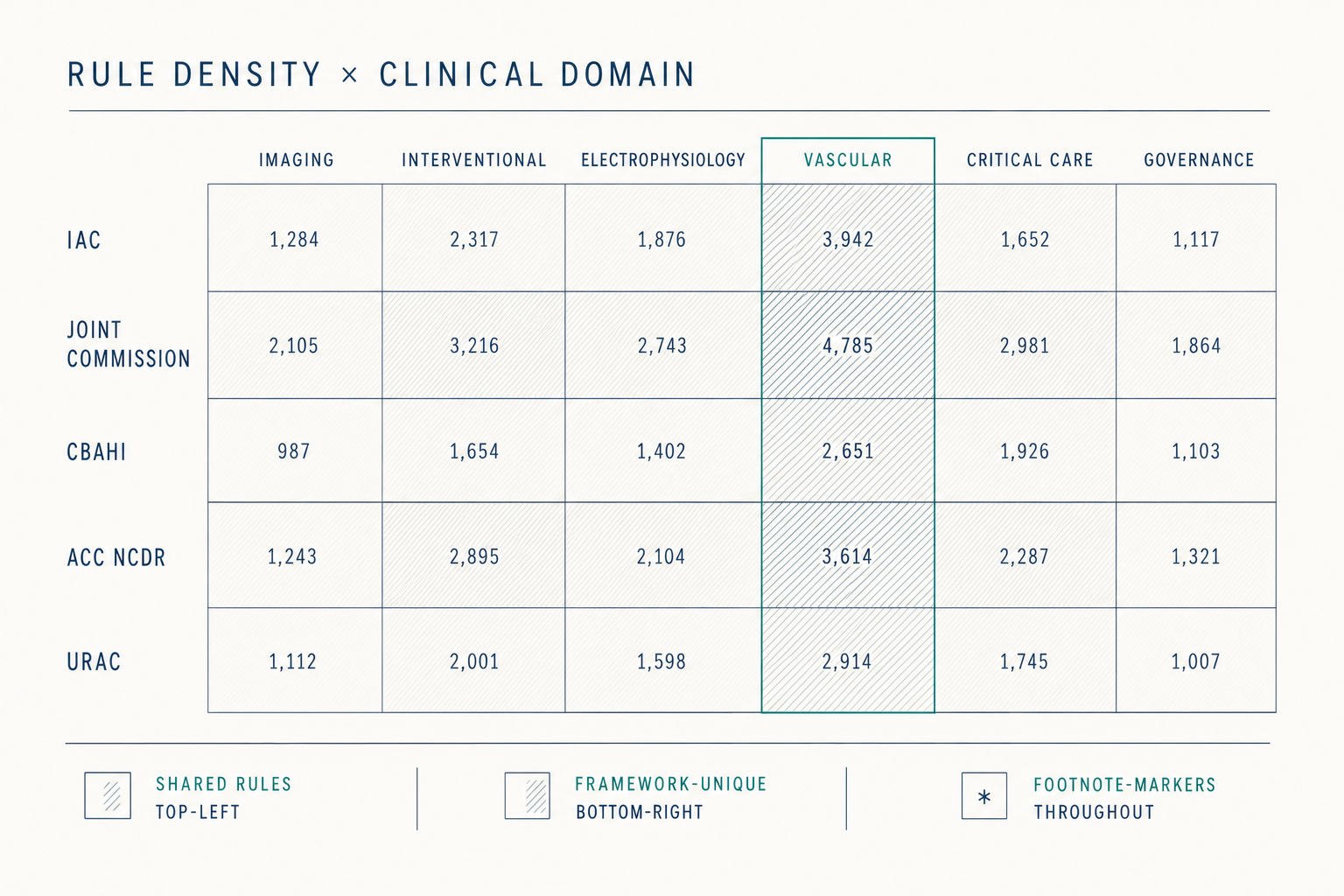

The eight scenarios describe different surfaces of the same structure. The Standards say what the evidence must show: appropriate use, technical quality, interpretive quality, report completeness and timeliness, credentialing currency, equipment maintenance, and volumes met. The evidence is already in the clinical data. What is missing is the layer that continuously watches that evidence accumulate against those Standards.

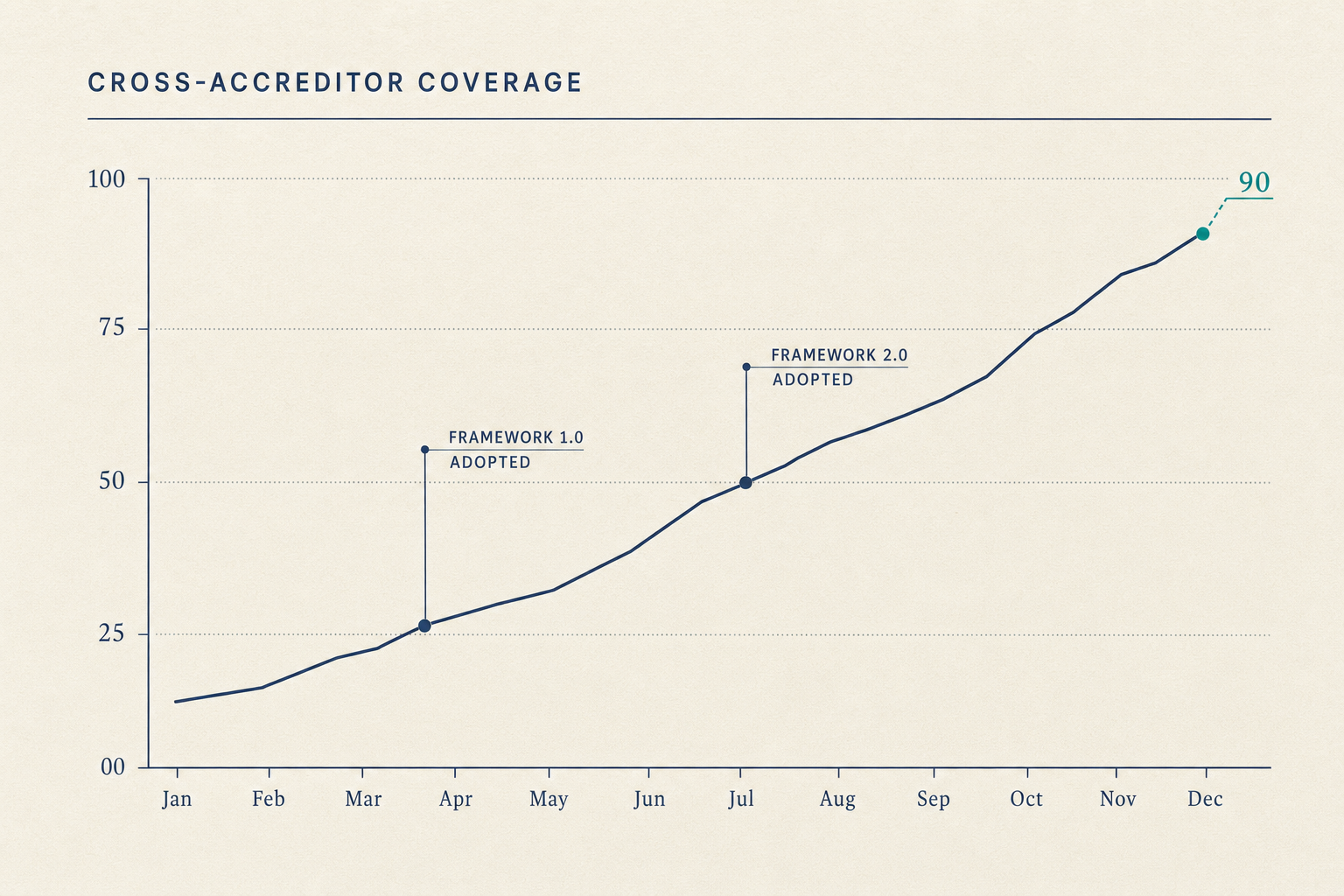

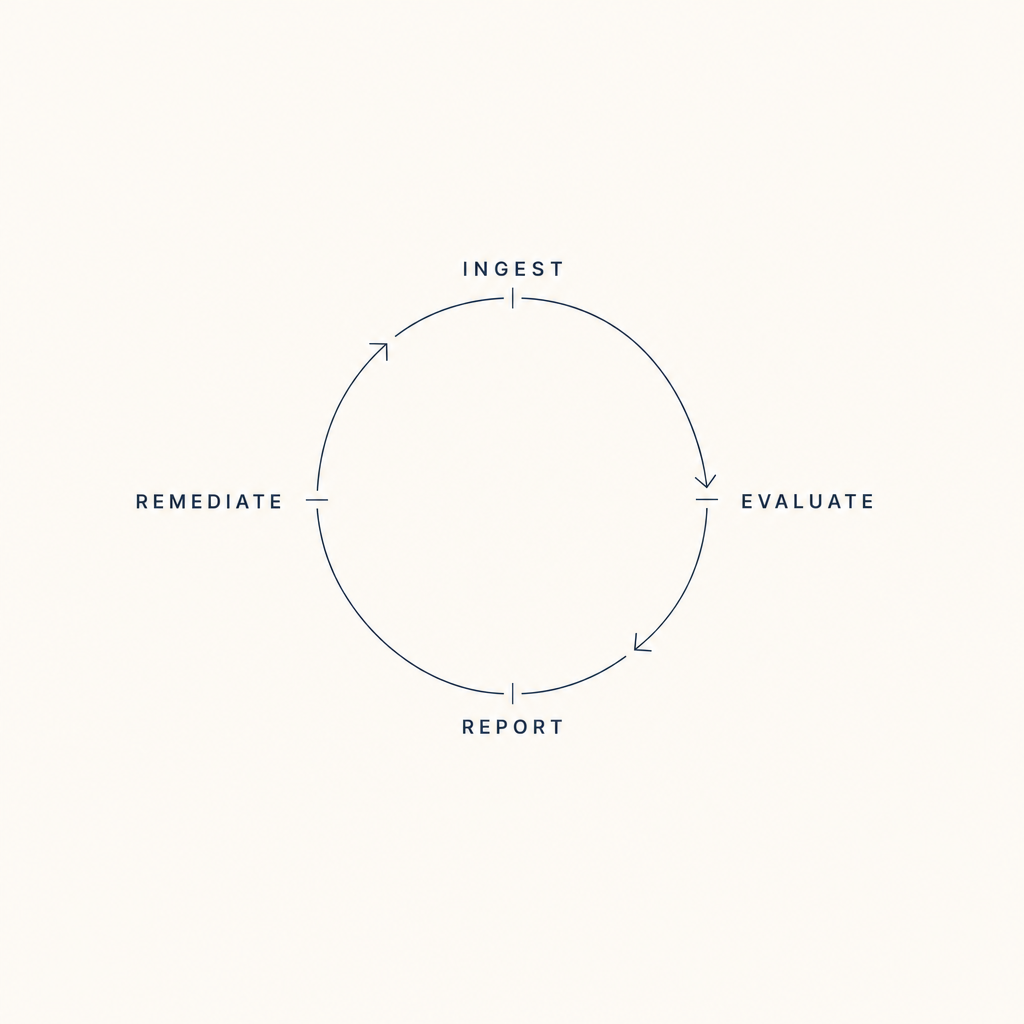

Continuous visibility, structured sampling, encoded matching, pre-validated packages, structured equipment data, configuration-release standards, tracked remediation, and captured QI artifacts are not eight products. They are eight surfaces of one substrate. The work shrinks because the substrate watches the evidence the Standards already describe.

Figure 4.1. Eight scenarios across the cycle, on one substrate